TensorFlow Demos

Bidirectional LSTM

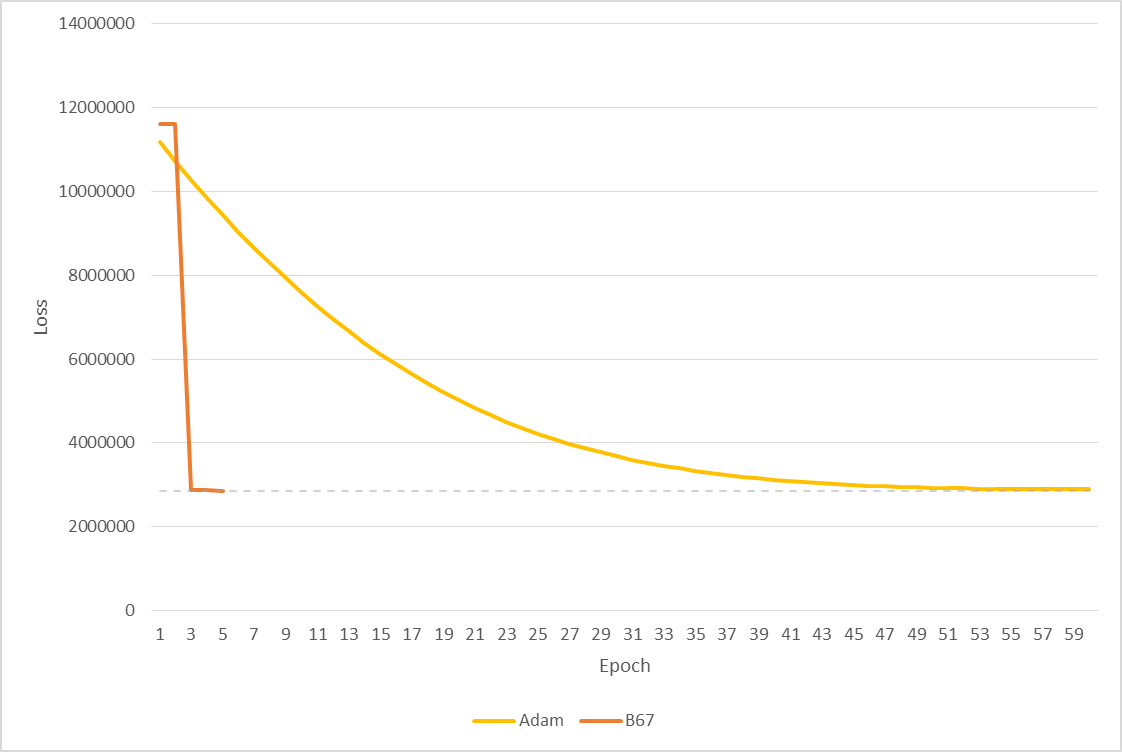

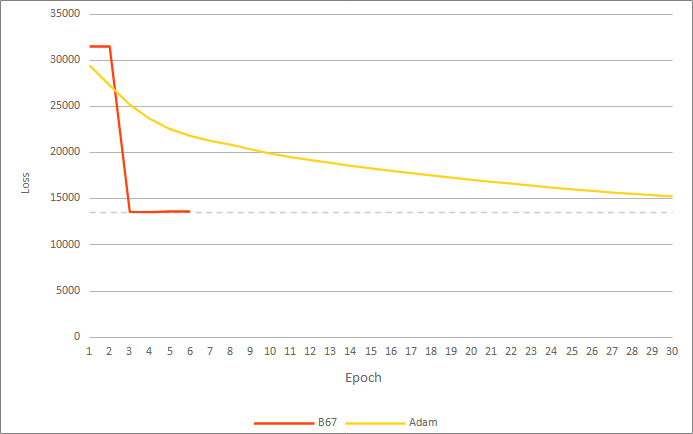

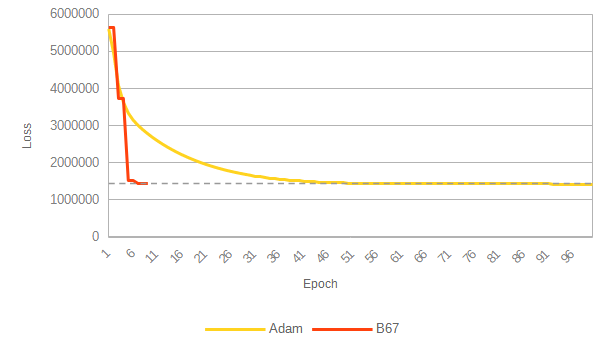

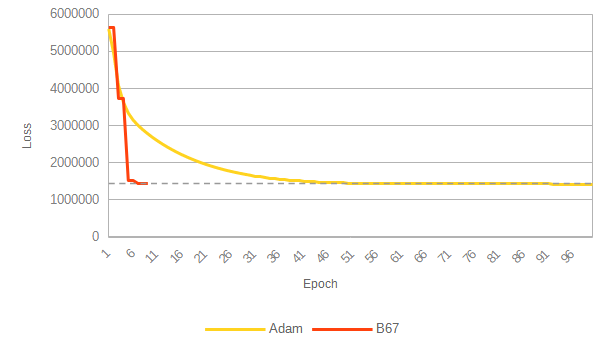

To demonstrate the performance of the B67 algorithm vs. Adam, a bidirectional LSTM neural network was trained on a random-number data set using both algorithms (see Network and Data Set).

B67's loss value after nine epochs was matched by Adam only after 90 epochs, i.e. ten times as many (see below). In wall-clock time, B67 ran for 32 minutes vs. 100 seconds for Adam. This gap is caused by the constraints TensorFlow places on our basic demo implementation (see Performance Penalty). In a production environment, this performance penalty does not exist and the time per epoch would be comparable to Adam's.

Result Summary

Results are shown below. A plot of loss vs. time is omitted as it is not representative of performance (see Performance Penalty).

Raw Data

Summary logs and screen output are available.

Demonstration Limitations

Performance Penalty

For this demonstration, B67 is implemented as a custom TensorFlow op. TensorFlow does not supply B67's required inputs by default, so we are forced to explicitly recalculate values that TensorFlow has already computed internally. This recalculation imposes a severe time penalty and allows B67 to perform a step only once every two epochs. In addition, the custom op is currently implemented for CPU only.

As stated above, in a production environment a B67 epoch would take roughly the same amount of time as an epoch of Adam (or of any other algorithm packaged with TensorFlow).

Test Setup

Network and Data Set

The network used is the one described in this Keras example without the sigmoid activation on the output node. Since the Keras example converges quickly, we use a random-number data set to better demonstrate relative performance:

- 25,000 samples are used with 200 elements per sample

- Inputs are random integers from 0 to 20,000

- Labels are random integers from 0 to 100
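A data set with the shape described above could be generated along the following lines. This is a minimal sketch, not the demo's actual code: the function name `make_dataset`, the seed value, the use of one label per sample, and the choice to treat the upper bounds as exclusive are all assumptions made for illustration.

```python
import numpy as np

NUM_SAMPLES = 25_000      # samples in the data set
SAMPLE_LENGTH = 200       # elements per sample
INPUT_RANGE = 20_000      # inputs drawn from [0, 20000); exclusive bound is an assumption
LABEL_RANGE = 100         # labels drawn from [0, 100); exclusive bound is an assumption


def make_dataset(seed=0):
    """Return (inputs, labels) filled with uniformly random integers.

    The seed value and the one-label-per-sample layout are hypothetical.
    """
    rng = np.random.default_rng(seed)
    inputs = rng.integers(0, INPUT_RANGE, size=(NUM_SAMPLES, SAMPLE_LENGTH))
    labels = rng.integers(0, LABEL_RANGE, size=(NUM_SAMPLES,))
    return inputs, labels


x_train, y_train = make_dataset(seed=0)
print(x_train.shape, y_train.shape)
```

Because the labels carry no relationship to the inputs, the network cannot converge quickly, which is the property the comparison relies on.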

Randomness Minimized for Performance Comparison

The random seeds for Python, NumPy, and TensorFlow are set to fixed values. In addition, shuffling of data sets is disabled.

Note that there still exist unavoidable variations in floating-point values due to non-deterministic ordering of parallel operations and aggregation of GPU calculations.
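Fixing the three seeds mentioned above typically looks like the sketch below. The helper name `set_global_seeds` and the seed value are hypothetical, and the guarded TensorFlow import is only there so the snippet also runs on a machine without TensorFlow installed. It is not the demo's actual setup code.

```python
import random

import numpy as np

SEED = 1234  # hypothetical value; the demo's actual seeds are not stated


def set_global_seeds(seed):
    """Seed Python, NumPy and (if available) TensorFlow.

    Returns True when TensorFlow was found and seeded, False otherwise.
    """
    random.seed(seed)         # Python's built-in generator
    np.random.seed(seed)      # NumPy's legacy global generator
    try:
        import tensorflow as tf
    except ImportError:
        return False
    tf.random.set_seed(seed)  # TensorFlow's global seed
    return True


set_global_seeds(SEED)
first = (random.random(), np.random.rand())

set_global_seeds(SEED)
second = (random.random(), np.random.rand())

# Re-seeding reproduces the same draws from both generators.
print(first == second)
```

When training with Keras, shuffling can be disabled by passing `shuffle=False` to `model.fit`. As noted above, this does not remove the floating-point variation introduced by parallel GPU execution.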

Loss function, batch size

- The loss function used is: .

- The batch size is 3500 samples (largest possible with available memory).
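For orientation, the batch size above implies the following number of batches per epoch for the 25,000-sample data set. This is plain arithmetic, assuming the final partial batch is kept rather than dropped; the variable names are illustrative.

```python
import math

NUM_SAMPLES = 25_000
BATCH_SIZE = 3_500

# Number of full batches and the size of the remaining partial batch.
full_batches, last_batch_size = divmod(NUM_SAMPLES, BATCH_SIZE)

# Batches per epoch when the partial batch is kept (an assumption).
batches_per_epoch = math.ceil(NUM_SAMPLES / BATCH_SIZE)

print(full_batches, last_batch_size, batches_per_epoch)  # prints: 7 500 8
```

Each epoch therefore consists of seven full batches plus one batch of 500 samples under that assumption.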

Adam

Adam was run with default parameters.

Software

- Ubuntu 22.04

- Python 3.10.6

- TensorFlow 2.10.0

Hardware

- CPU: Intel Core i7 8-Core 16-Thread, 2.9GHz Base, 4.8GHz Turbo

- GPU: NVIDIA GeForce RTX 3080 10GB

- RAM: 16GB (2x8GB) DDR4 3600MHz

- Hard drive: 1TB 3000MB/s SSD